Node.js Gzipping July 28, 2011

Yesterday I lied when I said it would be the last Node.js post for a while! Oh well.

So today I was looking to make my project site a little faster, particularly on the mobile side. Actually, this was the last three days' worth of trying to figure stuff out. Node.js has plenty of compression add-ons (modules), but the most standard compression format on the web is gzip. In the Accept-Encoding request header, the browser tells you whether or not it can handle it. Most can...

This seemed like an obvious mechanism to employ to decrease page load times... not that it's so slow, but when the traffic gets up there and the site starts bogging down, at least the network will be less of a bottleneck. Some browsers do not support gzip, so you always have to send uncompressed content in those cases.
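For what it's worth, a slightly more careful Accept-Encoding check than a plain substring match might look like the sketch below. The function name `clientAcceptsGzip` is made up for illustration; the only assumption is that a client can write `gzip;q=0` to say it does *not* want gzip, which a simple `indexOf("gzip")` test would miss.

```javascript
// Hypothetical helper (not part of any library): decides whether an
// Accept-Encoding header value allows a gzip response.
function clientAcceptsGzip(acceptEncoding) {
  if (!acceptEncoding) return false; // no header: send uncompressed

  var gzipQ = null; // q-value of an explicit "gzip" entry, if any
  var starQ = null; // q-value of a "*" wildcard entry, if any

  var parts = acceptEncoding.toLowerCase().split(",");
  for (var i = 0; i < parts.length; i++) {
    var fields = parts[i].trim().split(";");
    var coding = fields[0].trim();
    if (coding !== "gzip" && coding !== "*") continue;

    var q = 1; // a coding listed without a q parameter counts as q=1
    for (var j = 1; j < fields.length; j++) {
      var m = /^\s*q\s*=\s*([0-9.]+)\s*$/.exec(fields[j]);
      if (m) q = parseFloat(m[1]);
    }
    if (coding === "gzip") gzipQ = q;
    else starQ = q;
  }

  // An explicit gzip entry wins over the wildcard.
  if (gzipQ !== null) return gzipQ > 0;
  if (starQ !== null) return starQ > 0;
  return false;
}
```

With this, `clientAcceptsGzip("gzip, deflate")` is true, while `clientAcceptsGzip("gzip;q=0")` and a missing header are both false.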

So I found a good Node.js compression module that supported bzip2 as well as gzip. The problem was, it was only meant to work with npm (Node's package manager), which, for whatever reason, I've stayed away from. I like to keep my modules organized myself, I guess! So I pull the source from GitHub, build the package if it requires it, then make sure I can use it by just calling require("package-name"). That's worked for every case except the first gzip library I found... doh!

Luckily, GitHub is a very social place, and lots of developers will just fork a project and fix it. That was where the magic started. I found a fork of node-compress that fixed these issues, built it by just calling ./build.sh (which calls node-waf, which I'm fine with using!), and copied the binary to the correct location within the module directory. So all I had to do was modify my code to require("node-compress/lib/compress"). I'm fine with that too.

Code - gzip.js

    // gzip.js: gzip response bodies for clients that advertise gzip support.
    var compress = require("node-compress/lib/compress");

    // Extensions worth compressing; URLs with no extension are treated as pages.
    var encodeTypes = {"js":1,"css":1,"html":1};

    function acceptsGzip(req){
        // Ignore any query string when working out the extension.
        var url = req.url.split("?")[0];
        var ext = url.indexOf(".") == -1 ? "" : url.substring(url.lastIndexOf(".")+1);
        var accept = req.headers["accept-encoding"];
        return (ext in encodeTypes || ext == "") && accept != null && accept.indexOf("gzip") != -1;
    }

    // Copy a string into a Buffer of exactly the right byte length.
    function createBuffer(str, enc) {
        enc = enc || 'utf8';
        var len = Buffer.byteLength(str, enc);
        var buf = new Buffer(len);
        buf.write(str, 0, enc);
        return buf;
    }

    // Calls callback(body, encoding, headers) with gzipped data when the client
    // accepts it, or callback(data) with the original data otherwise.
    this.gzipData = function(req, res, data, callback){
        if (data != null && acceptsGzip(req)){
            var gzip = new compress.Gzip();
            var buf = Buffer.isBuffer(data) ? data : createBuffer(data, "utf8");
            gzip.write(buf, function(err, data1){
                // On a compression error, fall back to the uncompressed data.
                if (err) return callback(data);
                var encoded = data1.toString("binary");
                gzip.close(function(err, data2){
                    if (err) return callback(data);
                    encoded = encoded + data2.toString("binary");
                    callback(encoded, "binary", { "Content-Encoding": "gzip" });
                });
            });
        }
        else callback(data);
    };
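To show how the callback from gzipData might be consumed, here is a rough sketch of a response helper. `sendCompressed` and its parameters are hypothetical names for illustration; it takes the gzipData-style function as an argument, so it assumes nothing beyond the callback(body, encoding, headers) shape used in gzip.js.

```javascript
// Hypothetical helper: runs `body` through a gzipData-style function and
// writes whatever comes back (compressed or not) to the response.
function sendCompressed(gzipData, req, res, body, contentType) {
  gzipData(req, res, body, function (payload, encoding, extraHeaders) {
    var headers = { "Content-Type": contentType };

    // Merge in headers such as Content-Encoding when the data was gzipped.
    extraHeaders = extraHeaders || {};
    for (var name in extraHeaders) {
      headers[name] = extraHeaders[name];
    }

    res.writeHead(200, headers);
    if (encoding) {
      res.end(payload, encoding); // gzipped path: binary string plus encoding
    } else {
      res.end(payload);           // uncompressed path: original data as-is
    }
  });
}
```

Inside a request handler this would be called as something like `sendCompressed(gzip.gzipData, req, res, html, "text/html")`, assuming the module above was loaded with `var gzip = require("./gzip")`.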

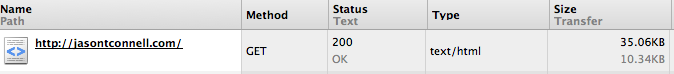

So it's awesome. Here's a picture of the code working on this page

Quite the improvement, at less than 30% of the size! Soon I'm going to work in a static file handler so that it doesn't have to re-gzip JS and CSS files on every request. I use caching extensively, so it won't re-gzip a file for you 10 times in a row, but it will still re-gzip it once for each of 10 different users on their first request. I can see that being a problem in the long run, although it's still fast as a mofo!
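One way the static file idea could go is to keep the compressed bytes in memory, keyed by file path, so each file is gzipped only once per server run. The sketch below is only an illustration under a couple of assumptions: it uses the `zlib` module built into newer Node.js releases rather than node-compress, and the names `gzipCache` and `gzipCached` are made up. It also ignores cache invalidation; a real handler would probably fold the file's modification time into the key.

```javascript
var zlib = require("zlib"); // built into newer Node.js; not the node-compress module

// Hypothetical in-memory cache: key (for example a file path) -> gzipped Buffer.
var gzipCache = Object.create(null);

// Returns the gzipped form of `data`, compressing only on the first call
// for a given key. gzipSync blocks while it compresses, which is tolerable
// here only because each file is compressed once.
function gzipCached(key, data) {
  if (!(key in gzipCache)) {
    gzipCache[key] = zlib.gzipSync(data);
  }
  return gzipCache[key];
}
```

The second request for the same key gets the same Buffer back without touching the compressor, no matter which user asks.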